Google claims its shady data collection protects us from ‘cognitive overload’

Google has spent the last decade and a half recasting its engineering culture as design-focused. But a recent scene that played out in court offered a sad rebuke of that narrative, outing Google as either entirely incompetent at design or manipulative in its practice.

The company has been facing a federal jury this week in California in a class action suit that claims that between 2016 and 2024, Google saved and used “pseudonymous” information about its users without consent. Despite customers opting out of data collection on Google services, the suit alleges that Google still accessed their data collected via third-party apps. How? Google tapped the data tracked by its own analytics software incorporated inside these apps. (Google did not respond to a request for comment by the time of publication.)

Explained like that, it seems almost obvious that Google could be privy to the data. But of course, most users have no idea what analytics software their apps are using. Furthermore, plaintiffs argue that 98 million people opted out of having Google collect their third-party data. They believed they were covered, even if there were some other hidden terms and conditions offered by individual apps.

Defending bad behavior

As part of its defense, Google brought in an expert witness, George Washington University professor Donna Hoffman, to argue its case. According to Law360, Hoffman testified that Google used a technique of “progressive disclosure” so that the most important disclosures were aired to users first. She also stated that Google employed “good UI design” to avoid “cognitive overload.”

Progressive disclosure isn’t an inherently deceptive UX practice. Done right, the technique can reveal more of a service or app’s capabilities at a pace that respects someone’s attention span. You learn more about it, bit by bit, over time. Video games are designed around this very principle. You learn how Mario jumps before you learn how he flies. Hoffman said that it was a technique Google used to satisfy its very different styles of users, who interact with legalese in different ways, and whom Google dubs “skippers, skimmers and readers.”

But Google leveraging progressive disclosure in this instance is bogus, and I offer that analysis as a design expert myself (who is not being financially compensated as an expert witness). No design challenge is stopping Google from offering a single switch right on its homepage for a user to opt out of every type of potential tracking from Google forever.

That would not overload anyone’s cognition, especially compared to the strange maze of settings and explainer pages that Google uses to index matters of privacy. Plaintiffs even presented evidence that employees inside Google were skeptical that they’d provided proper privacy disclosures to users.

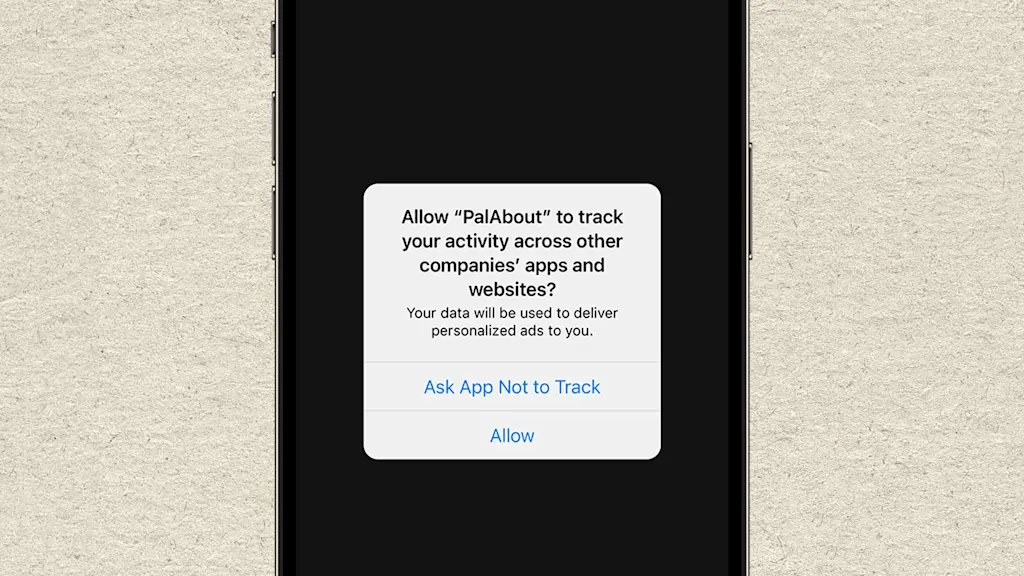

Google is hardly the only bad actor in this space. Apple created the privacy dystopia we live in as much as any of its peers. To this day, it offers a terrible UX option to iPhone users, who must choose between “allow” and “ask app not to track” in a popup, rather than having Apple simply deny the request on their behalf. Every time, I worry my thumb will slip and hit the wrong choice, when the anti-consumer option shouldn’t even be presented to me in the first place, let alone by a company that claims to be privacy-first.

If Google loses this case, the payout to consumers could be quite large. Plaintiffs are placing the value of the data at $3 per month. And with 173 million affected customers, that would reach up to $29 billion in compensatory damages.

As companies go even deeper into our lives, offering AI software a direct firehose of our personal data, disclosures around privacy are only going to grow murkier and more complex. Even if plaintiffs win this trial, our privacy feels like a losing battle.
